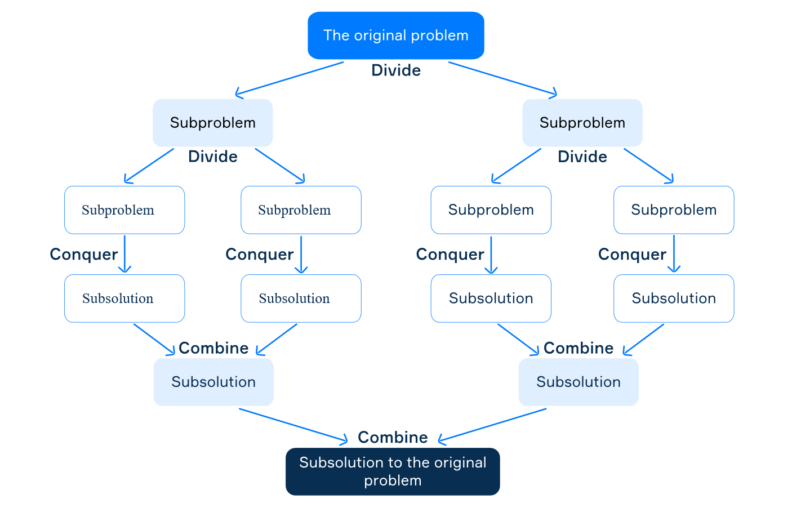

A thread limit can be process-wide, so that parallel calls to quicksort back off cooperatively from creating too many threads.

Using threads directly to write parallel algorithms, especially divide-and-conquer algorithms, is a bad idea: you will get poor scaling and poor load balancing, and, as you know, thread creation is expensive. Thread pools can help with the creation cost, but not with scaling and load balancing unless you write extra code.

Nowadays almost all modern parallel frameworks are built on top of a task-based work-stealing scheduler; examples are Intel TBB and the Microsoft Concurrency Runtime (ConcRT)/PPL. Instead of spawning threads or reusing threads from a pool, a "task" (typically a closure plus some bookkeeping data) is put onto work-stealing queue(s) to be run at some point by one of X worker threads. Typically the number of worker threads equals the number of hardware threads available on the system, so it does not matter much if you spawn or queue hundreds or thousands of tasks (it does in some cases, but that depends on the context). This is a much better situation for nested, divide-and-conquer, and fork-join parallel algorithms.

For (nested) data-parallel algorithms it is best to avoid spawning a task per element, because an operation on a single element is typically far too fine-grained to gain any benefit and is outweighed by the overhead of scheduler management. So on top of the lower-level work-stealing scheduler you have a higher-level layer that deals with dividing a container into chunks. This is still a much better situation than using threads or thread pools, because you are no longer dividing the work based on the optimal thread count.

Anyway, nothing like this is standardized in C++11. If you want a pure standard-library solution without adding third-party dependencies, the best you can do is either: A. [...]
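To illustrate the chunking point above with only the C++11 standard library, here is a minimal sketch that sums a vector one chunk per task rather than one element per task. The function name `chunked_sum` and the `grain` parameter are assumptions made for this example. Note that `std::async` is not a work-stealing scheduler: with `std::launch::async` each chunk may get its own thread, so the grain should stay coarse.

```cpp
#include <algorithm>
#include <cstddef>
#include <future>
#include <numeric>
#include <vector>

// Hypothetical helper (not from the original answer): sums `data` by
// splitting it into chunks of at most `grain` elements and summing each
// chunk in its own std::async task.
long long chunked_sum(const std::vector<int>& data, std::size_t grain) {
    if (grain == 0) grain = 1;  // guard against a zero chunk size
    std::vector<std::future<long long>> parts;
    for (std::size_t lo = 0; lo < data.size(); lo += grain) {
        const std::size_t hi = std::min(lo + grain, data.size());
        // One task per chunk, never one task per element.
        parts.push_back(std::async(std::launch::async, [&data, lo, hi] {
            return std::accumulate(data.begin() + static_cast<std::ptrdiff_t>(lo),
                                   data.begin() + static_cast<std::ptrdiff_t>(hi),
                                   0LL);
        }));
    }
    long long total = 0;
    for (auto& part : parts) total += part.get();  // wait for every chunk
    return total;
}
```

A larger `grain` means fewer tasks and less overhead; a smaller one gives finer load balancing at a higher scheduling cost.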
If the number of steps the algorithm takes is T(n) [...] does not require a real multiplication: we can just pad on the right number of zeros instead.

I have been refreshing my memory about sorting algorithms the past few days and I've come across a situation where I can't find what the best solution is. I wrote a basic implementation of quicksort, and I wanted to boost its performance by parallelizing its execution. The function has the signature `void quicksort(IteratorType begin, IteratorType end)` and finds its split point with `const IteratorType pivot = partition(begin, end)`.

One approach passes a recursion depth down the calls, with the decision about creating a thread made at the marked recursive call: `quicksort(pivot + 1, end, depth+1) // <- HERE`. As an alternative to using depth, you can set a global thread limit and only create a new thread if the limit hasn't been reached; if it has, then do the work sequentially.
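The global thread limit idea can be sketched with C++11 primitives. This is only an illustration under stated assumptions: the counter `g_spare_threads`, the helper `try_acquire_thread`, the limit of 4, and the three-way partition are all choices made for this example, not the original poster's code. It also assumes random-access iterators and element comparisons that do not throw.

```cpp
#include <algorithm>
#include <atomic>
#include <iterator>
#include <thread>
#include <vector>

// Assumed process-wide budget of extra threads; 4 is an illustrative value.
static std::atomic<int> g_spare_threads(4);

// Hypothetical helper: reserves one thread slot without blocking.
inline bool try_acquire_thread() {
    int n = g_spare_threads.load();
    while (n > 0) {
        // On failure, n is refreshed with the current value and we retry.
        if (g_spare_threads.compare_exchange_weak(n, n - 1)) return true;
    }
    return false;
}

template <typename It>
void quicksort(It begin, It end) {
    if (end - begin < 2) return;
    typedef typename std::iterator_traits<It>::value_type T;
    const T pivot_value = *(begin + (end - begin) / 2);  // copy: partition moves elements
    // Three-way split: [begin, mid1) < pivot, [mid1, mid2) == pivot, [mid2, end) > pivot.
    It mid1 = std::partition(begin, end,
                             [&](const T& x) { return x < pivot_value; });
    It mid2 = std::partition(mid1, end,
                             [&](const T& x) { return !(pivot_value < x); });
    if (try_acquire_thread()) {
        // Limit not reached: sort the left part on a new thread.
        std::thread left([=] { quicksort(begin, mid1); });
        quicksort(mid2, end);
        left.join();
        g_spare_threads.fetch_add(1);  // hand the slot back
    } else {
        // Limit reached: fall back to sequential recursion.
        quicksort(begin, mid1);
        quicksort(mid2, end);
    }
}
```

Because the counter is shared by every call in the process, concurrent sorts compete for the same slots instead of each spawning its own full set of threads.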

The merge sort algorithm can be turned into an iterative algorithm by iteratively merging each subsequent pair, then each group of four, et cetera. Due to a lack of function overhead, iterative algorithms tend to be faster in practice. However, because the recursive version's call tree is logarithmically deep, it does not require much run-time stack space: even sorting 4 gigs of items would only require 32 call entries on the stack, a very modest amount, considering that even if each call required 256 bytes on the stack, the total would only be 8 kilobytes.

The iterative version of mergesort is a minor modification to the recursive version; in fact we can reuse the earlier merging function. The algorithm works by merging small, sorted subsections of the original array to create larger sorted subsections. To accomplish this, we iterate through the array with successively larger "strides". The pseudocode entry point is `function sort_iterative(array a): array`, which returns a sorted copy of array a.

Binary search is declared as `function binary-search(value, array A): integer`. It returns the index of value in the given array, or -1 if value cannot be found, and it assumes the array is sorted in ascending order. Its recursive helper ends in one of two calls, `return search-inner(value, A, mid + 1, end)` or `return search-inner(value, A, start, mid)`.

Note that all recursive calls made are tail-calls, and thus the algorithm is iterative. If our programming language does not remove tail-calls for us already, we can remove them explicitly by turning the argument values passed to the recursive call into assignments, and then looping to the top of the function body again. The base case stays the same in the loop: `if start = end: return -1 fi // not found`. Even though we have an iterative algorithm, it's easier to reason about the recursive version.
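The tail-call removal described above can be sketched in C++ as follows. This is a minimal illustration rather than a transcription of the pseudocode: the name `binary_search_index`, the `int` element type, and 0-based indexing are assumptions for this example. Each former recursive call becomes an assignment to `start` or `end`, followed by another trip around the loop.

```cpp
#include <cstddef>
#include <vector>

// Returns the index of `value` in the ascending-sorted vector `a`,
// or -1 if `value` is not present.
long binary_search_index(const std::vector<int>& a, int value) {
    std::size_t start = 0;
    std::size_t end = a.size();  // one past the last candidate
    while (start != end) {
        const std::size_t mid = start + (end - start) / 2;
        if (a[mid] == value) return static_cast<long>(mid);
        if (a[mid] < value) {
            start = mid + 1;  // was: search-inner(value, A, mid + 1, end)
        } else {
            end = mid;        // was: search-inner(value, A, start, mid)
        }
    }
    return -1;  // start == end: not found
}
```

Computing `mid` as `start + (end - start) / 2` avoids the overflow that `(start + end) / 2` can hit on very large ranges.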
The problem that merge sort solves is general sorting: given an unordered array of elements that have a total ordering, create an array that has the same elements sorted. More precisely, an array a with indexes 1 through n is said to be sorted if, for all i, j such that 1 ≤ i < j ≤ n, the condition a[i] ≤ a[j] holds.

The merging step, given arrays a and b of lengths n and m, first handles an exhausted input: `if i >= n`, the result takes its next element from b and `j += 1`; `else-if j >= m`, the result takes its next element from a and `i += 1`.
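The merge step above, together with a stride-based driver, could look like the following C++ sketch. The text does not show the body of the iterative sort, so the driver here is an assumption, as are the name `merge_sorted`, the `int` element type, and 0-based indexing.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Merges two ascending-sorted vectors into one sorted vector.
std::vector<int> merge_sorted(const std::vector<int>& a,
                              const std::vector<int>& b) {
    std::vector<int> result;
    result.reserve(a.size() + b.size());
    std::size_t i = 0, j = 0;
    while (i < a.size() || j < b.size()) {
        if (i >= a.size()) result.push_back(b[j++]);       // a is exhausted
        else if (j >= b.size()) result.push_back(a[i++]);  // b is exhausted
        else if (b[j] < a[i]) result.push_back(b[j++]);
        else result.push_back(a[i++]);  // ties take from a, so the merge is stable
    }
    return result;
}

// Bottom-up merge sort: returns a sorted copy of `a` by merging runs of
// width 1, 2, 4, ... until a single run covers the whole array.
std::vector<int> sort_iterative(std::vector<int> a) {
    const std::size_t n = a.size();
    for (std::size_t width = 1; width < n; width *= 2) {
        std::vector<int> next;
        next.reserve(n);
        for (std::size_t lo = 0; lo < n; lo += 2 * width) {
            const std::size_t mid = std::min(lo + width, n);
            const std::size_t hi = std::min(lo + 2 * width, n);
            const std::vector<int> left(a.begin() + lo, a.begin() + mid);
            const std::vector<int> right(a.begin() + mid, a.begin() + hi);
            const std::vector<int> merged = merge_sorted(left, right);
            next.insert(next.end(), merged.begin(), merged.end());
        }
        a.swap(next);  // the merged runs become the input for the next width
    }
    return a;
}
```

Copying each run into temporary vectors keeps the sketch short; a tuned version would merge between two preallocated buffers instead.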
